6. Classification II: evaluation & tuning#

6.1. Overview#

This chapter continues the introduction to predictive modeling through classification. While the previous chapter covered training and data preprocessing, this chapter focuses on how to evaluate the performance of a classifier, as well as how to improve the classifier (where possible) to maximize its accuracy.

6.2. Chapter learning objectives#

By the end of the chapter, readers will be able to do the following:

Describe what training, validation, and test data sets are and how they are used in classification.

Split data into training, validation, and test data sets.

Describe what a random seed is and its importance in reproducible data analysis.

Set the random seed in Python using the

numpy.random.seedfunction.Describe and interpret accuracy, precision, recall, and confusion matrices.

Evaluate classification accuracy, precision, and recall in Python using a test set, a single validation set, and cross-validation.

Produce a confusion matrix in Python.

Choose the number of neighbors in a K-nearest neighbors classifier by maximizing estimated cross-validation accuracy.

Describe underfitting and overfitting, and relate it to the number of neighbors in K-nearest neighbors classification.

Describe the advantages and disadvantages of the K-nearest neighbors classification algorithm.

6.3. Evaluating performance#

Sometimes our classifier might make the wrong prediction. A classifier does not need to be right 100% of the time to be useful, though we don’t want the classifier to make too many wrong predictions. How do we measure how “good” our classifier is? Let’s revisit the breast cancer images data [Street et al., 1993] and think about how our classifier will be used in practice. A biopsy will be performed on a new patient’s tumor, the resulting image will be analyzed, and the classifier will be asked to decide whether the tumor is benign or malignant. The key word here is new: our classifier is “good” if it provides accurate predictions on data not seen during training, as this implies that it has actually learned about the relationship between the predictor variables and response variable, as opposed to simply memorizing the labels of individual training data examples. But then, how can we evaluate our classifier without visiting the hospital to collect more tumor images?

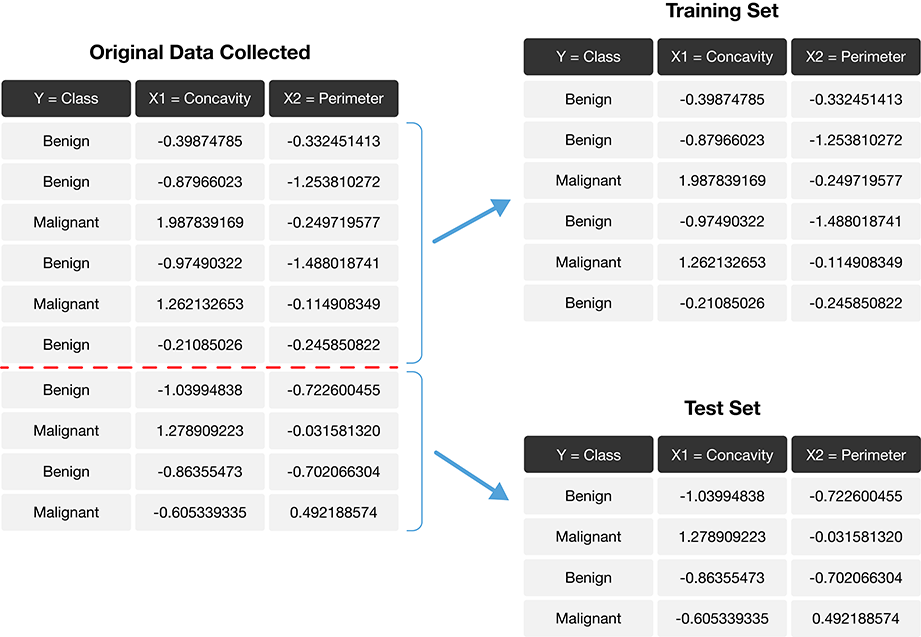

The trick is to split the data into a training set and test set (Fig. 6.1) and use only the training set when building the classifier. Then, to evaluate the performance of the classifier, we first set aside the labels from the test set, and then use the classifier to predict the labels in the test set. If our predictions match the actual labels for the observations in the test set, then we have some confidence that our classifier might also accurately predict the class labels for new observations without known class labels.

Note

If there were a golden rule of machine learning, it might be this: you cannot use the test data to build the model! If you do, the model gets to “see” the test data in advance, making it look more accurate than it really is. Imagine how bad it would be to overestimate your classifier’s accuracy when predicting whether a patient’s tumor is malignant or benign!

Fig. 6.1 Splitting the data into training and testing sets.#

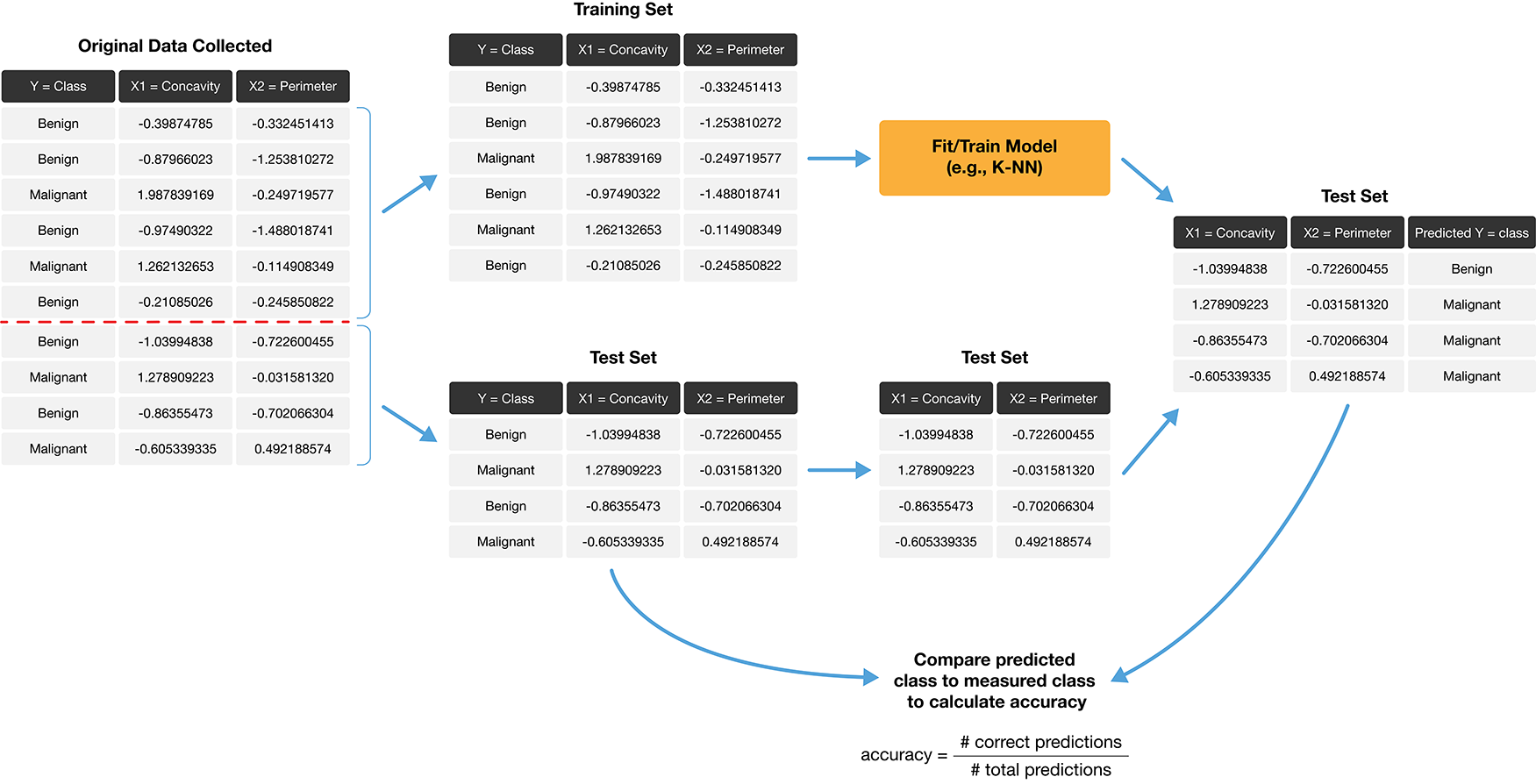

How exactly can we assess how well our predictions match the actual labels for the observations in the test set? One way we can do this is to calculate the prediction accuracy. This is the fraction of examples for which the classifier made the correct prediction. To calculate this, we divide the number of correct predictions by the number of predictions made. The process for assessing if our predictions match the actual labels in the test set is illustrated in Fig. 6.2.

Fig. 6.2 Process for splitting the data and finding the prediction accuracy.#

Accuracy is a convenient, general-purpose way to summarize the performance of a classifier with a single number. But prediction accuracy by itself does not tell the whole story. In particular, accuracy alone only tells us how often the classifier makes mistakes in general, but does not tell us anything about the kinds of mistakes the classifier makes. A more comprehensive view of performance can be obtained by additionally examining the confusion matrix. The confusion matrix shows how many test set labels of each type are predicted correctly and incorrectly, which gives us more detail about the kinds of mistakes the classifier tends to make. Table 6.1 shows an example of what a confusion matrix might look like for the tumor image data with a test set of 65 observations.

Predicted Malignant |

Predicted Benign |

|

|---|---|---|

Actually Malignant |

1 |

3 |

Actually Benign |

4 |

57 |

In the example in Table 6.1, we see that there was 1 malignant observation that was correctly classified as malignant (top left corner), and 57 benign observations that were correctly classified as benign (bottom right corner). However, we can also see that the classifier made some mistakes: it classified 3 malignant observations as benign, and 4 benign observations as malignant. The accuracy of this classifier is roughly 89%, given by the formula

But we can also see that the classifier only identified 1 out of 4 total malignant tumors; in other words, it misclassified 75% of the malignant cases present in the data set! In this example, misclassifying a malignant tumor is a potentially disastrous error, since it may lead to a patient who requires treatment not receiving it. Since we are particularly interested in identifying malignant cases, this classifier would likely be unacceptable even with an accuracy of 89%.

Focusing more on one label than the other is common in classification problems. In such cases, we typically refer to the label we are more interested in identifying as the positive label, and the other as the negative label. In the tumor example, we would refer to malignant observations as positive, and benign observations as negative. We can then use the following terms to talk about the four kinds of prediction that the classifier can make, corresponding to the four entries in the confusion matrix:

True Positive: A malignant observation that was classified as malignant (top left in Table 6.1).

False Positive: A benign observation that was classified as malignant (bottom left in Table 6.1).

True Negative: A benign observation that was classified as benign (bottom right in Table 6.1).

False Negative: A malignant observation that was classified as benign (top right in Table 6.1).

A perfect classifier would have zero false negatives and false positives (and therefore, 100% accuracy). However, classifiers in practice will almost always make some errors. So you should think about which kinds of error are most important in your application, and use the confusion matrix to quantify and report them. Two commonly used metrics that we can compute using the confusion matrix are the precision and recall of the classifier. These are often reported together with accuracy. Precision quantifies how many of the positive predictions the classifier made were actually positive. Intuitively, we would like a classifier to have a high precision: for a classifier with high precision, if the classifier reports that a new observation is positive, we can trust that the new observation is indeed positive. We can compute the precision of a classifier using the entries in the confusion matrix, with the formula

Recall quantifies how many of the positive observations in the test set were identified as positive. Intuitively, we would like a classifier to have a high recall: for a classifier with high recall, if there is a positive observation in the test data, we can trust that the classifier will find it. We can also compute the recall of the classifier using the entries in the confusion matrix, with the formula

In the example presented in Table 6.1, we have that the precision and recall are

So even with an accuracy of 89%, the precision and recall of the classifier were both relatively low. For this data analysis context, recall is particularly important: if someone has a malignant tumor, we certainly want to identify it. A recall of just 25% would likely be unacceptable!

Note

It is difficult to achieve both high precision and high recall at the same time; models with high precision tend to have low recall and vice versa. As an example, we can easily make a classifier that has perfect recall: just always guess positive! This classifier will of course find every positive observation in the test set, but it will make lots of false positive predictions along the way and have low precision. Similarly, we can easily make a classifier that has perfect precision: never guess positive! This classifier will never incorrectly identify an obsevation as positive, but it will make a lot of false negative predictions along the way. In fact, this classifier will have 0% recall! Of course, most real classifiers fall somewhere in between these two extremes. But these examples serve to show that in settings where one of the classes is of interest (i.e., there is a positive label), there is a trade-off between precision and recall that one has to make when designing a classifier.

6.4. Randomness and seeds#

Beginning in this chapter, our data analyses will often involve the use of randomness. We use randomness any time we need to make a decision in our analysis that needs to be fair, unbiased, and not influenced by human input. For example, in this chapter, we need to split a data set into a training set and test set to evaluate our classifier. We certainly do not want to choose how to split the data ourselves by hand, as we want to avoid accidentally influencing the result of the evaluation. So instead, we let Python randomly split the data. In future chapters we will use randomness in many other ways, e.g., to help us select a small subset of data from a larger data set, to pick groupings of data, and more.

However, the use of randomness runs counter to one of the main tenets of good data analysis practice: reproducibility. Recall that a reproducible analysis produces the same result each time it is run; if we include randomness in the analysis, would we not get a different result each time? The trick is that in Python—and other programming languages—randomness is not actually random! Instead, Python uses a random number generator that produces a sequence of numbers that are completely determined by a seed value. Once you set the seed value, everything after that point may look random, but is actually totally reproducible. As long as you pick the same seed value, you get the same result!

Let’s use an example to investigate how randomness works in Python. Say we

have a series object containing the integers from 0 to 9. We want

to randomly pick 10 numbers from that list, but we want it to be reproducible.

Before randomly picking the 10 numbers,

we call the seed function from the numpy package, and pass it any integer as the argument.

Below we use the seed number 1. At

that point, Python will keep track of the randomness that occurs throughout the code.

For example, we can call the sample method

on the series of numbers, passing the argument n=10 to indicate that we want 10 samples.

The to_list method converts the resulting series into a basic Python list to make

the output easier to read.

import numpy as np

import pandas as pd

np.random.seed(1)

nums_0_to_9 = pd.Series([0, 1, 2, 3, 4, 5, 6, 7, 8, 9])

random_numbers1 = nums_0_to_9.sample(n=10).to_list()

random_numbers1

[2, 9, 6, 4, 0, 3, 1, 7, 8, 5]

You can see that random_numbers1 is a list of 10 numbers

from 0 to 9 that, from all appearances, looks random. If

we run the sample method again,

we will get a fresh batch of 10 numbers that also look random.

random_numbers2 = nums_0_to_9.sample(n=10).to_list()

random_numbers2

[9, 5, 3, 0, 8, 4, 2, 1, 6, 7]

If we want to force Python to produce the same sequences of random numbers,

we can simply call the np.random.seed function with the seed value 1—the same

as before—and then call the sample method again.

np.random.seed(1)

random_numbers1_again = nums_0_to_9.sample(n=10).to_list()

random_numbers1_again

[2, 9, 6, 4, 0, 3, 1, 7, 8, 5]

random_numbers2_again = nums_0_to_9.sample(n=10).to_list()

random_numbers2_again

[9, 5, 3, 0, 8, 4, 2, 1, 6, 7]

Notice that after calling np.random.seed, we get the same

two sequences of numbers in the same order. random_numbers1 and random_numbers1_again

produce the same sequence of numbers, and the same can be said about random_numbers2 and

random_numbers2_again. And if we choose a different value for the seed—say, 4235—we

obtain a different sequence of random numbers.

np.random.seed(4235)

random_numbers1_different = nums_0_to_9.sample(n=10).to_list()

random_numbers1_different

[6, 7, 2, 3, 5, 9, 1, 4, 0, 8]

random_numbers2_different = nums_0_to_9.sample(n=10).to_list()

random_numbers2_different

[6, 0, 1, 3, 2, 8, 4, 9, 5, 7]

In other words, even though the sequences of numbers that Python is generating look random, they are totally determined when we set a seed value!

So what does this mean for data analysis? Well, sample is certainly not the

only place where randomness is used in Python. Many of the functions

that we use in scikit-learn and beyond use randomness—some

of them without even telling you about it. Also note that when Python starts

up, it creates its own seed to use. So if you do not explicitly

call the np.random.seed function, your results

will likely not be reproducible. Finally, be careful to set the seed only once at

the beginning of a data analysis. Each time you set the seed, you are inserting

your own human input, thereby influencing the analysis. For example, if you use

the sample many times throughout your analysis but set the seed each time, the

randomness that Python uses will not look as random as it should.

In summary: if you want your analysis to be reproducible, i.e., produce the same result

each time you run it, make sure to use np.random.seed exactly once

at the beginning of the analysis. Different argument values

in np.random.seed will lead to different patterns of randomness, but as long as you pick the same

value your analysis results will be the same. In the remainder of the textbook,

we will set the seed once at the beginning of each chapter.

Note

When you use np.random.seed, you are really setting the seed for the numpy

package’s default random number generator. Using the global default random

number generator is easier than other methods, but has some potential drawbacks. For example,

other code that you may not notice (e.g., code buried inside some

other package) could potentially also call np.random.seed, thus modifying

your analysis in an undesirable way. Furthermore, not all functions use

numpy’s random number generator; some may use another one entirely.

In that case, setting np.random.seed may not actually make your whole analysis

reproducible.

In this book, we will generally only use packages that play nicely with numpy’s

default random number generator, so we will stick with np.random.seed.

You can achieve more careful control over randomness in your analysis

by creating a numpy Generator object

once at the beginning of your analysis, and passing it to

the random_state argument that is available in many pandas and scikit-learn

functions. Those functions will then use your Generator to generate random numbers instead of

numpy’s default generator. For example, we can reproduce our earlier example by using a Generator

object with the seed value set to 1; we get the same lists of numbers once again.

from numpy.random import Generator, PCG64

rng = Generator(PCG64(seed=1))

random_numbers1_third = nums_0_to_9.sample(n=10, random_state=rng).to_list()

random_numbers1_third

array([2, 9, 6, 4, 0, 3, 1, 7, 8, 5])

random_numbers2_third = nums_0_to_9.sample(n=10, random_state=rng).to_list()

random_numbers2_third

array([9, 5, 3, 0, 8, 4, 2, 1, 6, 7])

6.5. Evaluating performance with scikit-learn#

Back to evaluating classifiers now!

In Python, we can use the scikit-learn package not only to perform K-nearest neighbors

classification, but also to assess how well our classification worked.

Let’s work through an example of how to use tools from scikit-learn to evaluate a classifier

using the breast cancer data set from the previous chapter.

We begin the analysis by loading the packages we require,

reading in the breast cancer data,

and then making a quick scatter plot visualization of

tumor cell concavity versus smoothness colored by diagnosis in Fig. 6.3.

You will also notice that we set the random seed using the np.random.seed function,

as described in Section 6.4.

# load packages

import altair as alt

import pandas as pd

from sklearn import set_config

# Output dataframes instead of arrays

set_config(transform_output="pandas")

# set the seed

np.random.seed(1)

# load data

cancer = pd.read_csv("data/wdbc_unscaled.csv")

# re-label Class "M" as "Malignant", and Class "B" as "Benign"

cancer["Class"] = cancer["Class"].replace({

"M" : "Malignant",

"B" : "Benign"

})

# create scatter plot of tumor cell concavity versus smoothness,

# labeling the points be diagnosis class

perim_concav = alt.Chart(cancer).mark_circle().encode(

x=alt.X("Smoothness").scale(zero=False),

y="Concavity",

color=alt.Color("Class").title("Diagnosis")

)

perim_concav

Fig. 6.3 Scatter plot of tumor cell concavity versus smoothness colored by diagnosis label.#

6.5.1. Create the train / test split#

Once we have decided on a predictive question to answer and done some preliminary exploration, the very next thing to do is to split the data into the training and test sets. Typically, the training set is between 50% and 95% of the data, while the test set is the remaining 5% to 50%; the intuition is that you want to trade off between training an accurate model (by using a larger training data set) and getting an accurate evaluation of its performance (by using a larger test data set). Here, we will use 75% of the data for training, and 25% for testing.

The train_test_split function from scikit-learn handles the procedure of splitting

the data for us. We can specify two very important parameters when using train_test_split to ensure

that the accuracy estimates from the test data are reasonable. First,

setting shuffle=True (which is the default) means the data will be shuffled before splitting,

which ensures that any ordering present

in the data does not influence the data that ends up in the training and testing sets.

Second, by specifying the stratify parameter to be the response variable in the training set,

it stratifies the data by the class label, to ensure that roughly

the same proportion of each class ends up in both the training and testing sets. For example,

in our data set, roughly 63% of the

observations are from the benign class (Benign), and 37% are from the malignant class (Malignant),

so specifying stratify as the class column ensures that roughly 63% of the training data are benign,

37% of the training data are malignant,

and the same proportions exist in the testing data.

Let’s use the train_test_split function to create the training and testing sets.

We first need to import the function from the sklearn package. Then

we will specify that train_size=0.75 so that 75% of our original data set ends up

in the training set. We will also set the stratify argument to the categorical label variable

(here, cancer["Class"]) to ensure that the training and testing subsets contain the

right proportions of each category of observation.

from sklearn.model_selection import train_test_split

cancer_train, cancer_test = train_test_split(

cancer, train_size=0.75, stratify=cancer["Class"]

)

cancer_train.info()

<class 'pandas.core.frame.DataFrame'>

Index: 426 entries, 196 to 296

Data columns (total 12 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 ID 426 non-null int64

1 Class 426 non-null object

2 Radius 426 non-null float64

3 Texture 426 non-null float64

4 Perimeter 426 non-null float64

5 Area 426 non-null float64

6 Smoothness 426 non-null float64

7 Compactness 426 non-null float64

8 Concavity 426 non-null float64

9 Concave_Points 426 non-null float64

10 Symmetry 426 non-null float64

11 Fractal_Dimension 426 non-null float64

dtypes: float64(10), int64(1), object(1)

memory usage: 43.3+ KB

cancer_test.info()

<class 'pandas.core.frame.DataFrame'>

Index: 143 entries, 116 to 15

Data columns (total 12 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 ID 143 non-null int64

1 Class 143 non-null object

2 Radius 143 non-null float64

3 Texture 143 non-null float64

4 Perimeter 143 non-null float64

5 Area 143 non-null float64

6 Smoothness 143 non-null float64

7 Compactness 143 non-null float64

8 Concavity 143 non-null float64

9 Concave_Points 143 non-null float64

10 Symmetry 143 non-null float64

11 Fractal_Dimension 143 non-null float64

dtypes: float64(10), int64(1), object(1)

memory usage: 14.5+ KB

We can see from the info method above that the training set contains 426 observations,

while the test set contains 143 observations. This corresponds to

a train / test split of 75% / 25%, as desired. Recall from Chapter 5

that we use the info method to preview the number of rows, the variable names, their data types, and

missing entries of a data frame.

We can use the value_counts method with the normalize argument set to True

to find the percentage of malignant and benign classes

in cancer_train. We see about 63% of the training

data are benign and 37%

are malignant, indicating that our class proportions were roughly preserved when we split the data.

cancer_train["Class"].value_counts(normalize=True)

Class

Benign 0.626761

Malignant 0.373239

Name: proportion, dtype: float64

6.5.2. Preprocess the data#

As we mentioned in the last chapter, K-nearest neighbors is sensitive to the scale of the predictors, so we should perform some preprocessing to standardize them. An additional consideration we need to take when doing this is that we should create the standardization preprocessor using only the training data. This ensures that our test data does not influence any aspect of our model training. Once we have created the standardization preprocessor, we can then apply it separately to both the training and test data sets.

Fortunately, scikit-learn helps us handle this properly as long as we wrap our

analysis steps in a Pipeline, as in Chapter 5.

So below we construct and prepare

the preprocessor using make_column_transformer just as before.

from sklearn.preprocessing import StandardScaler

from sklearn.compose import make_column_transformer

cancer_preprocessor = make_column_transformer(

(StandardScaler(), ["Smoothness", "Concavity"]),

)

6.5.3. Train the classifier#

Now that we have split our original data set into training and test sets, we

can create our K-nearest neighbors classifier with only the training set using

the technique we learned in the previous chapter. For now, we will just choose

the number \(K\) of neighbors to be 3, and use only the concavity and smoothness predictors by

selecting them from the cancer_train data frame.

We will first import the KNeighborsClassifier model and make_pipeline from sklearn.

Then as before we will create a model object, combine

the model object and preprocessor into a Pipeline using the make_pipeline function, and then finally

use the fit method to build the classifier.

from sklearn.neighbors import KNeighborsClassifier

from sklearn.pipeline import make_pipeline

knn = KNeighborsClassifier(n_neighbors=3)

X = cancer_train[["Smoothness", "Concavity"]]

y = cancer_train["Class"]

knn_pipeline = make_pipeline(cancer_preprocessor, knn)

knn_pipeline.fit(X, y)

knn_pipeline

Pipeline(steps=[('columntransformer',

ColumnTransformer(transformers=[('standardscaler',

StandardScaler(),

['Smoothness',

'Concavity'])])),

('kneighborsclassifier', KNeighborsClassifier(n_neighbors=3))])In a Jupyter environment, please rerun this cell to show the HTML representation or trust the notebook. On GitHub, the HTML representation is unable to render, please try loading this page with nbviewer.org.

Pipeline(steps=[('columntransformer',

ColumnTransformer(transformers=[('standardscaler',

StandardScaler(),

['Smoothness',

'Concavity'])])),

('kneighborsclassifier', KNeighborsClassifier(n_neighbors=3))])ColumnTransformer(transformers=[('standardscaler', StandardScaler(),

['Smoothness', 'Concavity'])])['Smoothness', 'Concavity']

StandardScaler()

KNeighborsClassifier(n_neighbors=3)

6.5.4. Predict the labels in the test set#

Now that we have a K-nearest neighbors classifier object, we can use it to

predict the class labels for our test set and

augment the original test data with a column of predictions.

The Class variable contains the actual

diagnoses, while the predicted contains the predicted diagnoses from the

classifier. Note that below we print out just the ID, Class, and predicted

variables in the output data frame.

cancer_test["predicted"] = knn_pipeline.predict(cancer_test[["Smoothness", "Concavity"]])

cancer_test[["ID", "Class", "predicted"]]

| ID | Class | predicted | |

|---|---|---|---|

| 116 | 864726 | Benign | Malignant |

| 146 | 869691 | Malignant | Malignant |

| 86 | 86135501 | Malignant | Malignant |

| 12 | 846226 | Malignant | Malignant |

| 105 | 863030 | Malignant | Malignant |

| ... | ... | ... | ... |

| 244 | 884180 | Malignant | Malignant |

| 23 | 851509 | Malignant | Malignant |

| 125 | 86561 | Benign | Benign |

| 281 | 8912055 | Benign | Benign |

| 15 | 84799002 | Malignant | Malignant |

143 rows × 3 columns

6.5.5. Evaluate performance#

Finally, we can assess our classifier’s performance. First, we will examine accuracy.

To do this we will use the score method, specifying two arguments:

predictors and the actual labels. We pass the same test data

for the predictors that we originally passed into predict when making predictions,

and we provide the actual labels via the cancer_test["Class"] series.

knn_pipeline.score(

cancer_test[["Smoothness", "Concavity"]],

cancer_test["Class"]

)

0.8951048951048951

The output shows that the estimated accuracy of the classifier on the test data

was 90%. To compute the precision and recall, we can use the

precision_score and recall_score functions from scikit-learn. We specify

the true labels from the Class variable as the y_true argument, the predicted

labels from the predicted variable as the y_pred argument,

and which label should be considered to be positive via the pos_label argument.

from sklearn.metrics import recall_score, precision_score

precision_score(

y_true=cancer_test["Class"],

y_pred=cancer_test["predicted"],

pos_label="Malignant"

)

0.8275862068965517

recall_score(

y_true=cancer_test["Class"],

y_pred=cancer_test["predicted"],

pos_label="Malignant"

)

0.9056603773584906

The output shows that the estimated precision and recall of the classifier on the test

data was 83% and 91%, respectively.

Finally, we can look at the confusion matrix for the classifier

using the crosstab function from pandas. The crosstab function takes two

arguments: the actual labels first, then the predicted labels second. Note that

crosstab orders its columns alphabetically, but the positive label is still Malignant,

even if it is not in the top left corner as in the example confusion matrix earlier in this chapter.

pd.crosstab(

cancer_test["Class"],

cancer_test["predicted"]

)

| predicted | Benign | Malignant |

|---|---|---|

| Class | ||

| Benign | 80 | 10 |

| Malignant | 5 | 48 |

The confusion matrix shows 48 observations were correctly predicted as malignant, and 80 were correctly predicted as benign. It also shows that the classifier made some mistakes; in particular, it classified 5 observations as benign when they were actually malignant, and 10 observations as malignant when they were actually benign. Using our formulas from earlier, we see that the accuracy, precision, and recall agree with what Python reported.

6.5.6. Critically analyze performance#

We now know that the classifier was 90% accurate on the test data set, and had a precision of 83% and a recall of 91%. That sounds pretty good! Wait, is it good? Or do we need something higher?

In general, a good value for accuracy (as well as precision and recall, if applicable) depends on the application; you must critically analyze your accuracy in the context of the problem you are solving. For example, if we were building a classifier for a kind of tumor that is benign 99% of the time, a classifier with 99% accuracy is not terribly impressive (just always guess benign!). And beyond just accuracy, we need to consider the precision and recall: as mentioned earlier, the kind of mistake the classifier makes is important in many applications as well. In the previous example with 99% benign observations, it might be very bad for the classifier to predict “benign” when the actual class is “malignant” (a false negative), as this might result in a patient not receiving appropriate medical attention. In other words, in this context, we need the classifier to have a high recall. On the other hand, it might be less bad for the classifier to guess “malignant” when the actual class is “benign” (a false positive), as the patient will then likely see a doctor who can provide an expert diagnosis. In other words, we are fine with sacrificing some precision in the interest of achieving high recall. This is why it is important not only to look at accuracy, but also the confusion matrix.

However, there is always an easy baseline that you can compare to for any classification problem: the majority classifier. The majority classifier always guesses the majority class label from the training data, regardless of the predictor variables’ values. It helps to give you a sense of scale when considering accuracies. If the majority classifier obtains a 90% accuracy on a problem, then you might hope for your K-nearest neighbors classifier to do better than that. If your classifier provides a significant improvement upon the majority classifier, this means that at least your method is extracting some useful information from your predictor variables. Be careful though: improving on the majority classifier does not necessarily mean the classifier is working well enough for your application.

As an example, in the breast cancer data, recall the proportions of benign and malignant observations in the training data are as follows:

cancer_train["Class"].value_counts(normalize=True)

Class

Benign 0.626761

Malignant 0.373239

Name: proportion, dtype: float64

Since the benign class represents the majority of the training data, the majority classifier would always predict that a new observation is benign. The estimated accuracy of the majority classifier is usually fairly close to the majority class proportion in the training data. In this case, we would suspect that the majority classifier will have an accuracy of around 63%. The K-nearest neighbors classifier we built does quite a bit better than this, with an accuracy of 90%. This means that from the perspective of accuracy, the K-nearest neighbors classifier improved quite a bit on the basic majority classifier. Hooray! But we still need to be cautious; in this application, it is likely very important not to misdiagnose any malignant tumors to avoid missing patients who actually need medical care. The confusion matrix above shows that the classifier does, indeed, misdiagnose a significant number of malignant tumors as benign (5 out of 53 malignant tumors, or 9%!). Therefore, even though the accuracy improved upon the majority classifier, our critical analysis suggests that this classifier may not have appropriate performance for the application.

6.6. Tuning the classifier#

The vast majority of predictive models in statistics and machine learning have parameters. A parameter is a number you have to pick in advance that determines some aspect of how the model behaves. For example, in the K-nearest neighbors classification algorithm, \(K\) is a parameter that we have to pick that determines how many neighbors participate in the class vote. By picking different values of \(K\), we create different classifiers that make different predictions.

So then, how do we pick the best value of \(K\), i.e., tune the model? And is it possible to make this selection in a principled way? In this book, we will focus on maximizing the accuracy of the classifier. Ideally, we want somehow to maximize the accuracy of our classifier on data it hasn’t seen yet. But we cannot use our test data set in the process of building our model. So we will play the same trick we did before when evaluating our classifier: we’ll split our training data itself into two subsets, use one to train the model, and then use the other to evaluate it. In this section, we will cover the details of this procedure, as well as how to use it to help you pick a good parameter value for your classifier.

And remember: don’t touch the test set during the tuning process. Tuning is a part of model training!

6.6.1. Cross-validation#

The first step in choosing the parameter \(K\) is to be able to evaluate the classifier using only the training data. If this is possible, then we can compare the classifier’s performance for different values of \(K\)—and pick the best—using only the training data. As suggested at the beginning of this section, we will accomplish this by splitting the training data, training on one subset, and evaluating on the other. The subset of training data used for evaluation is often called the validation set.

There is, however, one key difference from the train/test split that we performed earlier. In particular, we were forced to make only a single split of the data. This is because at the end of the day, we have to produce a single classifier. If we had multiple different splits of the data into training and testing data, we would produce multiple different classifiers. But while we are tuning the classifier, we are free to create multiple classifiers based on multiple splits of the training data, evaluate them, and then choose a parameter value based on all of the different results. If we just split our overall training data once, our best parameter choice will depend strongly on whatever data was lucky enough to end up in the validation set. Perhaps using multiple different train/validation splits, we’ll get a better estimate of accuracy, which will lead to a better choice of the number of neighbors \(K\) for the overall set of training data.

Let’s investigate this idea in Python! In particular, we will generate five different train/validation splits of our overall training data, train five different K-nearest neighbors models, and evaluate their accuracy. We will start with just a single split.

# create the 25/75 split of the *training data* into sub-training and validation

cancer_subtrain, cancer_validation = train_test_split(

cancer_train, train_size=0.75, stratify=cancer_train["Class"]

)

# fit the model on the sub-training data

knn = KNeighborsClassifier(n_neighbors=3)

X = cancer_subtrain[["Smoothness", "Concavity"]]

y = cancer_subtrain["Class"]

knn_pipeline = make_pipeline(cancer_preprocessor, knn)

knn_pipeline.fit(X, y)

# compute the score on validation data

acc = knn_pipeline.score(

cancer_validation[["Smoothness", "Concavity"]],

cancer_validation["Class"]

)

acc

0.897196261682243

The accuracy estimate using this split is 89.7%. Now we repeat the above code 4 more times, which generates 4 more splits. Therefore we get five different shuffles of the data, and therefore five different values for accuracy: [89.7%, 88.8%, 87.9%, 86.0%, 87.9%]. None of these values are necessarily “more correct” than any other; they’re just five estimates of the true, underlying accuracy of our classifier built using our overall training data. We can combine the estimates by taking their average (here 88.0%) to try to get a single assessment of our classifier’s accuracy; this has the effect of reducing the influence of any one (un)lucky validation set on the estimate.

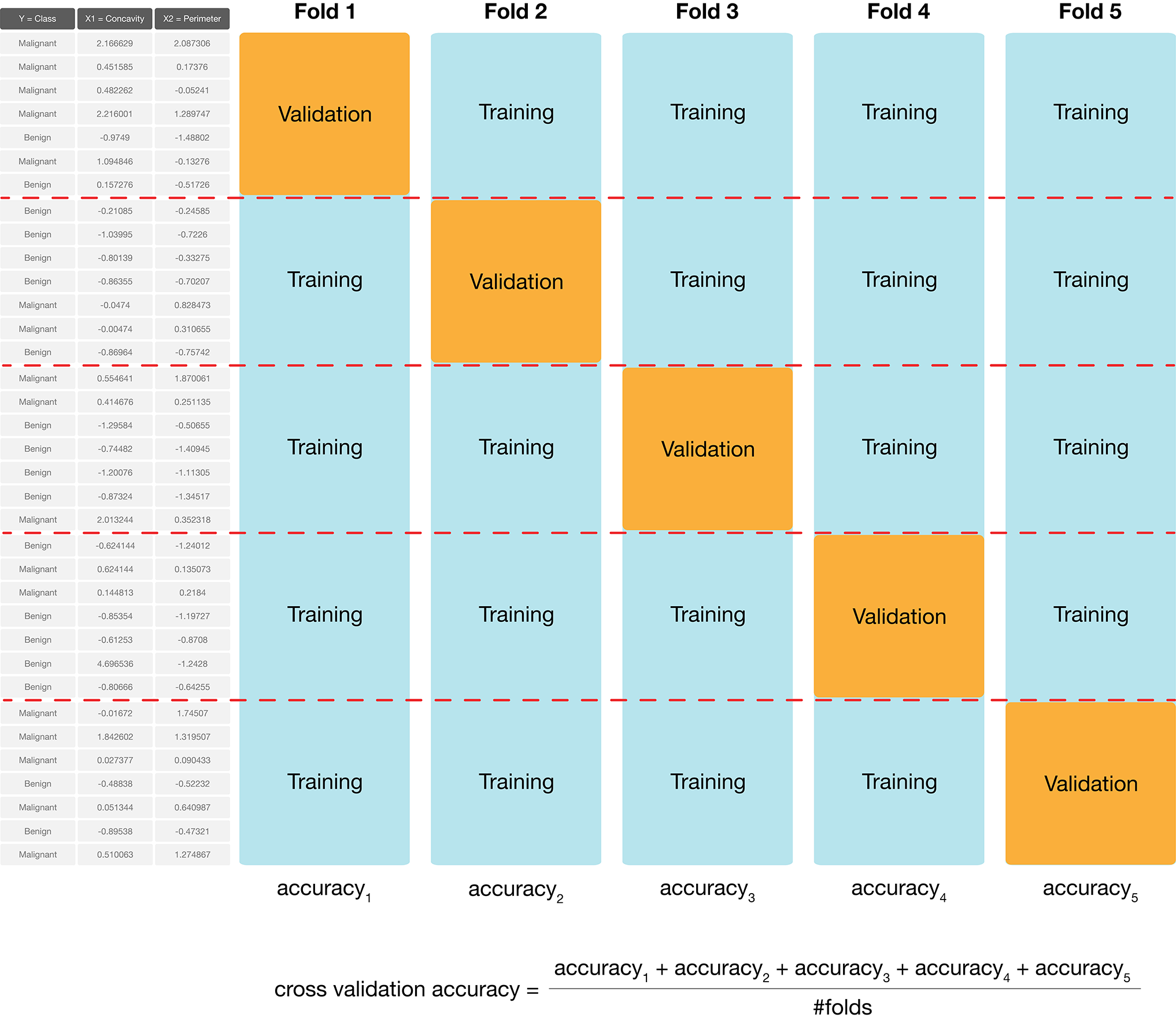

In practice, we don’t use random splits, but rather use a more structured splitting procedure so that each observation in the data set is used in a validation set only a single time. The name for this strategy is cross-validation. In cross-validation, we split our overall training data into \(C\) evenly sized chunks. Then, iteratively use \(1\) chunk as the validation set and combine the remaining \(C-1\) chunks as the training set. This procedure is shown in Fig. 6.4. Here, \(C=5\) different chunks of the data set are used, resulting in 5 different choices for the validation set; we call this 5-fold cross-validation.

Fig. 6.4 5-fold cross-validation.#

To perform 5-fold cross-validation in Python with scikit-learn, we use another

function: cross_validate. This function requires that we specify

a modelling Pipeline as the estimator argument,

the number of folds as the cv argument,

and the training data predictors and labels as the X and y arguments.

Since the cross_validate function outputs a dictionary, we use pd.DataFrame to convert it to a pandas

dataframe for better visualization.

Note that the cross_validate function handles stratifying the classes in

each train and validate fold automatically.

from sklearn.model_selection import cross_validate

knn = KNeighborsClassifier(n_neighbors=3)

cancer_pipe = make_pipeline(cancer_preprocessor, knn)

X = cancer_train[["Smoothness", "Concavity"]]

y = cancer_train["Class"]

cv_5_df = pd.DataFrame(

cross_validate(

estimator=cancer_pipe,

cv=5,

X=X,

y=y

)

)

cv_5_df

| fit_time | score_time | test_score | |

|---|---|---|---|

| 0 | 0.004383 | 0.005850 | 0.837209 |

| 1 | 0.003752 | 0.005568 | 0.870588 |

| 2 | 0.003676 | 0.005506 | 0.894118 |

| 3 | 0.003770 | 0.005581 | 0.870588 |

| 4 | 0.003580 | 0.005487 | 0.882353 |

The validation scores we are interested in are contained in the test_score column.

We can then aggregate the mean and standard error

of the classifier’s validation accuracy across the folds.

You should consider the mean (mean) to be the estimated accuracy, while the standard

error (sem) is a measure of how uncertain we are in that mean value. A detailed treatment of this

is beyond the scope of this chapter; but roughly, if your estimated mean is 0.87 and standard

error is 0.01, you can expect the true average accuracy of the

classifier to be somewhere roughly between 86% and 88% (although it may

fall outside this range). You may ignore the other columns in the metrics data frame.

cv_5_metrics = cv_5_df.agg(["mean", "sem"])

cv_5_metrics

| fit_time | score_time | test_score | |

|---|---|---|---|

| mean | 0.003832 | 0.005598 | 0.870971 |

| sem | 0.000142 | 0.000065 | 0.009501 |

We can choose any number of folds, and typically the more we use the better our accuracy estimate will be (lower standard error). However, we are limited by computational power: the more folds we choose, the more computation it takes, and hence the more time it takes to run the analysis. So when you do cross-validation, you need to consider the size of the data, the speed of the algorithm (e.g., K-nearest neighbors), and the speed of your computer. In practice, this is a trial-and-error process, but typically \(C\) is chosen to be either 5 or 10. Here we will try 10-fold cross-validation to see if we get a lower standard error.

cv_10 = pd.DataFrame(

cross_validate(

estimator=cancer_pipe,

cv=10,

X=X,

y=y

)

)

cv_10_df = pd.DataFrame(cv_10)

cv_10_metrics = cv_10_df.agg(["mean", "sem"])

cv_10_metrics

| fit_time | score_time | test_score | |

|---|---|---|---|

| mean | 0.003641 | 0.004264 | 0.884939 |

| sem | 0.000035 | 0.000033 | 0.006718 |

In this case, using 10-fold instead of 5-fold cross validation did reduce the standard error very slightly. In fact, due to the randomness in how the data are split, sometimes you might even end up with a higher standard error when increasing the number of folds! We can make the reduction in standard error more dramatic by increasing the number of folds by a large amount. In the following code we show the result when \(C = 50\); picking such a large number of folds can take a long time to run in practice, so we usually stick to 5 or 10.

cv_50_df = pd.DataFrame(

cross_validate(

estimator=cancer_pipe,

cv=50,

X=X,

y=y

)

)

cv_50_metrics = cv_50_df.agg(["mean", "sem"])

cv_50_metrics

| fit_time | score_time | test_score | |

|---|---|---|---|

| mean | 0.003591 | 0.003130 | 0.888056 |

| sem | 0.000013 | 0.000009 | 0.003005 |

6.6.2. Parameter value selection#

Using 5- and 10-fold cross-validation, we have estimated that the prediction accuracy of our classifier is somewhere around 88%. Whether that is good or not depends entirely on the downstream application of the data analysis. In the present situation, we are trying to predict a tumor diagnosis, with expensive, damaging chemo/radiation therapy or patient death as potential consequences of misprediction. Hence, we might like to do better than 88% for this application.

In order to improve our classifier, we have one choice of parameter: the number of

neighbors, \(K\). Since cross-validation helps us evaluate the accuracy of our

classifier, we can use cross-validation to calculate an accuracy for each value

of \(K\) in a reasonable range, and then pick the value of \(K\) that gives us the

best accuracy. The scikit-learn package collection provides built-in

functionality, named GridSearchCV, to automatically handle the details for us.

Before we use GridSearchCV, we need to create a new pipeline

with a KNeighborsClassifier that has the number of neighbors left unspecified.

knn = KNeighborsClassifier()

cancer_tune_pipe = make_pipeline(cancer_preprocessor, knn)

Next we specify the grid of parameter values that we want to try for

each tunable parameter. We do this in a Python dictionary: the key is

the identifier of the parameter to tune, and the value is a list of parameter values

to try when tuning. We can find the “identifier” of a parameter by using

the get_params method on the pipeline.

cancer_tune_pipe.get_params()

{'memory': None,

'steps': [('columntransformer',

ColumnTransformer(transformers=[('standardscaler', StandardScaler(),

['Smoothness', 'Concavity'])])),

('kneighborsclassifier', KNeighborsClassifier())],

'verbose': False,

'columntransformer': ColumnTransformer(transformers=[('standardscaler', StandardScaler(),

['Smoothness', 'Concavity'])]),

'kneighborsclassifier': KNeighborsClassifier(),

'columntransformer__n_jobs': None,

'columntransformer__remainder': 'drop',

'columntransformer__sparse_threshold': 0.3,

'columntransformer__transformer_weights': None,

'columntransformer__transformers': [('standardscaler',

StandardScaler(),

['Smoothness', 'Concavity'])],

'columntransformer__verbose': False,

'columntransformer__verbose_feature_names_out': True,

'columntransformer__standardscaler': StandardScaler(),

'columntransformer__standardscaler__copy': True,

'columntransformer__standardscaler__with_mean': True,

'columntransformer__standardscaler__with_std': True,

'kneighborsclassifier__algorithm': 'auto',

'kneighborsclassifier__leaf_size': 30,

'kneighborsclassifier__metric': 'minkowski',

'kneighborsclassifier__metric_params': None,

'kneighborsclassifier__n_jobs': None,

'kneighborsclassifier__n_neighbors': 5,

'kneighborsclassifier__p': 2,

'kneighborsclassifier__weights': 'uniform'}

Wow, there’s quite a bit of stuff there! If you sift through the muck

a little bit, you will see one parameter identifier that stands out:

"kneighborsclassifier__n_neighbors". This identifier combines the name

of the K nearest neighbors classification step in our pipeline, kneighborsclassifier,

with the name of the parameter, n_neighbors.

We now construct the parameter_grid dictionary that will tell GridSearchCV

what parameter values to try.

Note that you can specify multiple tunable parameters

by creating a dictionary with multiple key-value pairs, but

here we just have to tune the number of neighbors.

parameter_grid = {

"kneighborsclassifier__n_neighbors": range(1, 100, 5),

}

The range function in Python that we used above allows us to specify a sequence of values.

The first argument is the starting number (here, 1),

the second argument is one greater than the final number (here, 100),

and the third argument is the number to values to skip between steps in the sequence (here, 5).

So in this case we generate the sequence 1, 6, 11, 16, …, 96.

If we instead specified range(0, 100, 5), we would get the sequence 0, 5, 10, 15, …, 90, 95.

The number 100 is not included because the third argument is one greater than the final possible

number in the sequence. There are two additional useful ways to employ range.

If we call range with just one argument, Python counts

up to that number starting at 0. So range(4) is the same as range(0, 4, 1) and generates the sequence 0, 1, 2, 3.

If we call range with two arguments, Python counts starting at the first number up to the second number.

So range(1, 4) is the same as range(1, 4, 1) and generates the sequence 1, 2, 3.

Okay! We are finally ready to create the GridSearchCV object.

First we import it from the sklearn package.

Then we pass it the cancer_tune_pipe pipeline in the estimator argument,

the parameter_grid in the param_grid argument,

and specify cv=10 folds. Note that this does not actually run

the tuning yet; just as before, we will have to use the fit method.

from sklearn.model_selection import GridSearchCV

cancer_tune_grid = GridSearchCV(

estimator=cancer_tune_pipe,

param_grid=parameter_grid,

cv=10

)

Now we use the fit method on the GridSearchCV object to begin the tuning process.

We pass the training data predictors and labels as the two arguments to fit as usual.

The cv_results_ attribute of the output contains the resulting cross-validation

accuracy estimate for each choice of n_neighbors, but it isn’t in an easily used

format. We will wrap it in a pd.DataFrame to make it easier to understand,

and print the info of the result.

cancer_tune_grid.fit(

cancer_train[["Smoothness", "Concavity"]],

cancer_train["Class"]

)

accuracies_grid = pd.DataFrame(cancer_tune_grid.cv_results_)

accuracies_grid.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 20 entries, 0 to 19

Data columns (total 19 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 mean_fit_time 20 non-null float64

1 std_fit_time 20 non-null float64

2 mean_score_time 20 non-null float64

3 std_score_time 20 non-null float64

4 param_kneighborsclassifier__n_neighbors 20 non-null object

5 params 20 non-null object

6 split0_test_score 20 non-null float64

7 split1_test_score 20 non-null float64

8 split2_test_score 20 non-null float64

9 split3_test_score 20 non-null float64

10 split4_test_score 20 non-null float64

11 split5_test_score 20 non-null float64

12 split6_test_score 20 non-null float64

13 split7_test_score 20 non-null float64

14 split8_test_score 20 non-null float64

15 split9_test_score 20 non-null float64

16 mean_test_score 20 non-null float64

17 std_test_score 20 non-null float64

18 rank_test_score 20 non-null int32

dtypes: float64(16), int32(1), object(2)

memory usage: 3.0+ KB

There is a lot of information to look at here, but we are most interested

in three quantities: the number of neighbors (param_kneighbors_classifier__n_neighbors),

the cross-validation accuracy estimate (mean_test_score),

and the standard error of the accuracy estimate. Unfortunately GridSearchCV does

not directly output the standard error for each cross-validation accuracy; but

it does output the standard deviation (std_test_score). We can compute

the standard error from the standard deviation by dividing it by the square

root of the number of folds, i.e.,

We will also rename the parameter name column to be a bit more readable,

and drop the now unused std_test_score column.

accuracies_grid["sem_test_score"] = accuracies_grid["std_test_score"] / 10**(1/2)

accuracies_grid = (

accuracies_grid[[

"param_kneighborsclassifier__n_neighbors",

"mean_test_score",

"sem_test_score"

]]

.rename(columns={"param_kneighborsclassifier__n_neighbors": "n_neighbors"})

)

accuracies_grid

| n_neighbors | mean_test_score | sem_test_score | |

|---|---|---|---|

| 0 | 1 | 0.845127 | 0.019966 |

| 1 | 6 | 0.873200 | 0.015680 |

| 2 | 11 | 0.861517 | 0.019547 |

| 3 | 16 | 0.861573 | 0.017787 |

| 4 | 21 | 0.866279 | 0.017889 |

| 5 | 26 | 0.875637 | 0.016026 |

| 6 | 31 | 0.885050 | 0.015406 |

| 7 | 36 | 0.887375 | 0.013694 |

| 8 | 41 | 0.887375 | 0.013694 |

| 9 | 46 | 0.887320 | 0.013314 |

| 10 | 51 | 0.882669 | 0.014523 |

| 11 | 56 | 0.878018 | 0.014414 |

| 12 | 61 | 0.880343 | 0.014299 |

| 13 | 66 | 0.873200 | 0.015416 |

| 14 | 71 | 0.877962 | 0.013660 |

| 15 | 76 | 0.873200 | 0.014698 |

| 16 | 81 | 0.873200 | 0.014698 |

| 17 | 86 | 0.880288 | 0.011277 |

| 18 | 91 | 0.875581 | 0.012967 |

| 19 | 96 | 0.875581 | 0.008193 |

We can decide which number of neighbors is best by plotting the accuracy versus \(K\),

as shown in Fig. 6.5.

Here we are using the shortcut point=True to layer a point and line chart.

accuracy_vs_k = alt.Chart(accuracies_grid).mark_line(point=True).encode(

x=alt.X("n_neighbors").title("Neighbors"),

y=alt.Y("mean_test_score")

.scale(zero=False)

.title("Accuracy estimate")

)

accuracy_vs_k

Fig. 6.5 Plot of estimated accuracy versus the number of neighbors.#

We can also obtain the number of neighbours with the highest accuracy programmatically by accessing

the best_params_ attribute of the fit GridSearchCV object. Note that it is still useful to visualize

the results as we did above since this provides additional information on how the model performance varies.

cancer_tune_grid.best_params_

{'kneighborsclassifier__n_neighbors': 36}

Setting the number of neighbors to \(K =\) 36 provides the highest cross-validation accuracy estimate (88.7%). But there is no exact or perfect answer here; any selection from \(K = 30\) to \(80\) or so would be reasonably justified, as all of these differ in classifier accuracy by a small amount. Remember: the values you see on this plot are estimates of the true accuracy of our classifier. Although the \(K =\) 36 value is higher than the others on this plot, that doesn’t mean the classifier is actually more accurate with this parameter value! Generally, when selecting \(K\) (and other parameters for other predictive models), we are looking for a value where:

we get roughly optimal accuracy, so that our model will likely be accurate;

changing the value to a nearby one (e.g., adding or subtracting a small number) doesn’t decrease accuracy too much, so that our choice is reliable in the presence of uncertainty;

the cost of training the model is not prohibitive (e.g., in our situation, if \(K\) is too large, predicting becomes expensive!).

We know that \(K =\) 36 provides the highest estimated accuracy. Further, Fig. 6.5 shows that the estimated accuracy changes by only a small amount if we increase or decrease \(K\) near \(K =\) 36. And finally, \(K =\) 36 does not create a prohibitively expensive computational cost of training. Considering these three points, we would indeed select \(K =\) 36 for the classifier.

6.6.3. Under/Overfitting#

To build a bit more intuition, what happens if we keep increasing the number of

neighbors \(K\)? In fact, the cross-validation accuracy estimate actually starts to decrease!

Let’s specify a much larger range of values of \(K\) to try in the param_grid

argument of GridSearchCV. Fig. 6.6 shows a plot of estimated accuracy as

we vary \(K\) from 1 to almost the number of observations in the data set.

large_param_grid = {

"kneighborsclassifier__n_neighbors": range(1, 385, 10),

}

large_cancer_tune_grid = GridSearchCV(

estimator=cancer_tune_pipe,

param_grid=large_param_grid,

cv=10

)

large_cancer_tune_grid.fit(

cancer_train[["Smoothness", "Concavity"]],

cancer_train["Class"]

)

large_accuracies_grid = pd.DataFrame(large_cancer_tune_grid.cv_results_)

large_accuracy_vs_k = alt.Chart(large_accuracies_grid).mark_line(point=True).encode(

x=alt.X("param_kneighborsclassifier__n_neighbors").title("Neighbors"),

y=alt.Y("mean_test_score")

.scale(zero=False)

.title("Accuracy estimate")

)

large_accuracy_vs_k

Fig. 6.6 Plot of accuracy estimate versus number of neighbors for many K values.#

Underfitting: What is actually happening to our classifier that causes this? As we increase the number of neighbors, more and more of the training observations (and those that are farther and farther away from the point) get a “say” in what the class of a new observation is. This causes a sort of “averaging effect” to take place, making the boundary between where our classifier would predict a tumor to be malignant versus benign to smooth out and become simpler. If you take this to the extreme, setting \(K\) to the total training data set size, then the classifier will always predict the same label regardless of what the new observation looks like. In general, if the model isn’t influenced enough by the training data, it is said to underfit the data.

Overfitting: In contrast, when we decrease the number of neighbors, each individual data point has a stronger and stronger vote regarding nearby points. Since the data themselves are noisy, this causes a more “jagged” boundary corresponding to a less simple model. If you take this case to the extreme, setting \(K = 1\), then the classifier is essentially just matching each new observation to its closest neighbor in the training data set. This is just as problematic as the large \(K\) case, because the classifier becomes unreliable on new data: if we had a different training set, the predictions would be completely different. In general, if the model is influenced too much by the training data, it is said to overfit the data.

Fig. 6.7 Effect of K in overfitting and underfitting.#

Both overfitting and underfitting are problematic and will lead to a model that does not generalize well to new data. When fitting a model, we need to strike a balance between the two. You can see these two effects in Fig. 6.7, which shows how the classifier changes as we set the number of neighbors \(K\) to 1, 7, 20, and 300.

6.6.4. Evaluating on the test set#

Now that we have tuned the K-NN classifier and set \(K =\) 36,

we are done building the model and it is time to evaluate the quality of its predictions on the held out

test data, as we did earlier in Section 6.5.5.

We first need to retrain the K-NN classifier

on the entire training data set using the selected number of neighbors.

Fortunately we do not have to do this ourselves manually; scikit-learn does it for

us automatically. To make predictions and assess the estimated accuracy of the best model on the test data, we can use the

score and predict methods of the fit GridSearchCV object. We can then pass those predictions to

the precision, recall, and crosstab functions to assess the estimated precision and recall, and print a confusion matrix.

cancer_test["predicted"] = cancer_tune_grid.predict(

cancer_test[["Smoothness", "Concavity"]]

)

cancer_tune_grid.score(

cancer_test[["Smoothness", "Concavity"]],

cancer_test["Class"]

)

0.9090909090909091

precision_score(

y_true=cancer_test["Class"],

y_pred=cancer_test["predicted"],

pos_label='Malignant'

)

0.8846153846153846

recall_score(

y_true=cancer_test["Class"],

y_pred=cancer_test["predicted"],

pos_label='Malignant'

)

0.8679245283018868

pd.crosstab(

cancer_test["Class"],

cancer_test["predicted"]

)

| predicted | Benign | Malignant |

|---|---|---|

| Class | ||

| Benign | 84 | 6 |

| Malignant | 7 | 46 |

At first glance, this is a bit surprising: the accuracy of the classifier has not changed much despite tuning the number of neighbors! Our first model with \(K =\) 3 (before we knew how to tune) had an estimated accuracy of 90%, while the tuned model with \(K =\) 36 had an estimated accuracy of 91%. Upon examining Fig. 6.5 again to see the cross validation accuracy estimates for a range of neighbors, this result becomes much less surprising. From 1 to around 96 neighbors, the cross validation accuracy estimate varies only by around 3%, with each estimate having a standard error around 1%. Since the cross-validation accuracy estimates the test set accuracy, the fact that the test set accuracy also doesn’t change much is expected. Also note that the \(K =\) 3 model had a precision of 83% and recall of 91%, while the tuned model had a precision of 88% and recall of 87%. Given that the recall decreased—remember, in this application, recall is critical to making sure we find all the patients with malignant tumors—the tuned model may actually be less preferred in this setting. In any case, it is important to think critically about the result of tuning. Models tuned to maximize accuracy are not necessarily better for a given application.

6.7. Summary#

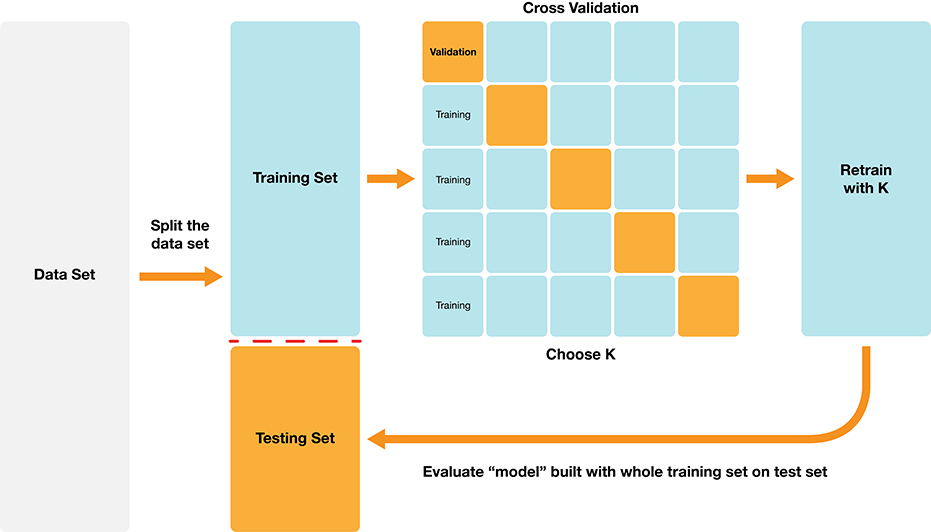

Classification algorithms use one or more quantitative variables to predict the value of another categorical variable. In particular, the K-nearest neighbors algorithm does this by first finding the \(K\) points in the training data nearest to the new observation, and then returning the majority class vote from those training observations. We can tune and evaluate a classifier by splitting the data randomly into a training and test data set. The training set is used to build the classifier, and we can tune the classifier (e.g., select the number of neighbors in K-nearest neighbors) by maximizing estimated accuracy via cross-validation. After we have tuned the model, we can use the test set to estimate its accuracy. The overall process is summarized in Fig. 6.8.

Fig. 6.8 Overview of K-NN classification.#

The overall workflow for performing K-nearest neighbors classification using scikit-learn is as follows:

Use the

train_test_splitfunction to split the data into a training and test set. Set thestratifyargument to the class label column of the dataframe. Put the test set aside for now.Create a

Pipelinethat specifies the preprocessing steps and the classifier.Define the parameter grid by passing the set of \(K\) values that you would like to tune.

Use

GridSearchCVto estimate the classifier accuracy for a range of \(K\) values. Pass the pipeline and parameter grid defined in steps 2. and 3. as theparam_gridargument and theestimatorargument, respectively.Execute the grid search by passing the training data to the

fitmethod on theGridSearchCVinstance created in step 4.Pick a value of \(K\) that yields a high cross-validation accuracy estimate that doesn’t change much if you change \(K\) to a nearby value.

Create a new model object for the best parameter value (i.e., \(K\)), and retrain the classifier by calling the

fitmethod.Evaluate the estimated accuracy of the classifier on the test set using the

scoremethod.

In these last two chapters, we focused on the K-nearest neighbors algorithm, but there are many other methods we could have used to predict a categorical label. All algorithms have their strengths and weaknesses, and we summarize these for the K-NN here.

Strengths: K-nearest neighbors classification

is a simple, intuitive algorithm,

requires few assumptions about what the data must look like, and

works for binary (two-class) and multi-class (more than 2 classes) classification problems.

Weaknesses: K-nearest neighbors classification

becomes very slow as the training data gets larger,

may not perform well with a large number of predictors, and

may not perform well when classes are imbalanced.

6.8. Predictor variable selection#

Note

This section is not required reading for the remainder of the textbook. It is included for those readers interested in learning how irrelevant variables can influence the performance of a classifier, and how to pick a subset of useful variables to include as predictors.

Another potentially important part of tuning your classifier is to choose which variables from your data will be treated as predictor variables. Technically, you can choose anything from using a single predictor variable to using every variable in your data; the K-nearest neighbors algorithm accepts any number of predictors. However, it is not the case that using more predictors always yields better predictions! In fact, sometimes including irrelevant predictors can actually negatively affect classifier performance.

6.8.1. The effect of irrelevant predictors#

Let’s take a look at an example where K-nearest neighbors performs

worse when given more predictors to work with. In this example, we modified

the breast cancer data to have only the Smoothness, Concavity, and

Perimeter variables from the original data. Then, we added irrelevant

variables that we created ourselves using a random number generator.

The irrelevant variables each take a value of 0 or 1 with equal probability for each observation, regardless

of what the value Class variable takes. In other words, the irrelevant variables have

no meaningful relationship with the Class variable.

cancer_irrelevant[

["Class", "Smoothness", "Concavity", "Perimeter", "Irrelevant1", "Irrelevant2"]

]

| Class | Smoothness | Concavity | Perimeter | Irrelevant1 | Irrelevant2 | |

|---|---|---|---|---|---|---|

| 0 | Malignant | 0.11840 | 0.30010 | 122.80 | 0 | 1 |

| 1 | Malignant | 0.08474 | 0.08690 | 132.90 | 0 | 1 |

| 2 | Malignant | 0.10960 | 0.19740 | 130.00 | 1 | 0 |

| 3 | Malignant | 0.14250 | 0.24140 | 77.58 | 1 | 0 |

| 4 | Malignant | 0.10030 | 0.19800 | 135.10 | 1 | 0 |

| ... | ... | ... | ... | ... | ... | ... |

| 564 | Malignant | 0.11100 | 0.24390 | 142.00 | 0 | 0 |

| 565 | Malignant | 0.09780 | 0.14400 | 131.20 | 0 | 1 |

| 566 | Malignant | 0.08455 | 0.09251 | 108.30 | 1 | 1 |

| 567 | Malignant | 0.11780 | 0.35140 | 140.10 | 0 | 0 |

| 568 | Benign | 0.05263 | 0.00000 | 47.92 | 1 | 1 |

569 rows × 6 columns

Next, we build a sequence of K-NN classifiers that include Smoothness,

Concavity, and Perimeter as predictor variables, but also increasingly many irrelevant

variables. In particular, we create 6 data sets with 0, 5, 10, 15, 20, and 40 irrelevant predictors.

Then we build a model, tuned via 5-fold cross-validation, for each data set.

Fig. 6.9 shows

the estimated cross-validation accuracy versus the number of irrelevant predictors. As

we add more irrelevant predictor variables, the estimated accuracy of our

classifier decreases. This is because the irrelevant variables add a random

amount to the distance between each pair of observations; the more irrelevant

variables there are, the more (random) influence they have, and the more they

corrupt the set of nearest neighbors that vote on the class of the new

observation to predict.

Fig. 6.9 Effect of inclusion of irrelevant predictors.#

Although the accuracy decreases as expected, one surprising thing about Fig. 6.9 is that it shows that the method still outperforms the baseline majority classifier (with about 63% accuracy) even with 40 irrelevant variables. How could that be? Fig. 6.10 provides the answer: the tuning procedure for the K-nearest neighbors classifier combats the extra randomness from the irrelevant variables by increasing the number of neighbors. Of course, because of all the extra noise in the data from the irrelevant variables, the number of neighbors does not increase smoothly; but the general trend is increasing. Fig. 6.11 corroborates this evidence; if we fix the number of neighbors to \(K=3\), the accuracy falls off more quickly.

Fig. 6.10 Tuned number of neighbors for varying number of irrelevant predictors.#

Fig. 6.11 Accuracy versus number of irrelevant predictors for tuned and untuned number of neighbors.#

6.8.2. Finding a good subset of predictors#

So then, if it is not ideal to use all of our variables as predictors without consideration, how

do we choose which variables we should use? A simple method is to rely on your scientific understanding

of the data to tell you which variables are not likely to be useful predictors. For example, in the cancer

data that we have been studying, the ID variable is just a unique identifier for the observation.

As it is not related to any measured property of the cells, the ID variable should therefore not be used

as a predictor. That is, of course, a very clear-cut case. But the decision for the remaining variables

is less obvious, as all seem like reasonable candidates. It

is not clear which subset of them will create the best classifier. One could use visualizations and

other exploratory analyses to try to help understand which variables are potentially relevant, but

this process is both time-consuming and error-prone when there are many variables to consider.

Therefore we need a more systematic and programmatic way of choosing variables.

This is a very difficult problem to solve in

general, and there are a number of methods that have been developed that apply

in particular cases of interest. Here we will discuss two basic

selection methods as an introduction to the topic. See the additional resources at the end of

this chapter to find out where you can learn more about variable selection, including more advanced methods.

The first idea you might think of for a systematic way to select predictors is to try all possible subsets of predictors and then pick the set that results in the “best” classifier. This procedure is indeed a well-known variable selection method referred to as best subset selection [Beale et al., 1967, Hocking and Leslie, 1967]. In particular, you

create a separate model for every possible subset of predictors,

tune each one using cross-validation, and

pick the subset of predictors that gives you the highest cross-validation accuracy.

Best subset selection is applicable to any classification method (K-NN or otherwise). However, it becomes very slow when you have even a moderate number of predictors to choose from (say, around 10). This is because the number of possible predictor subsets grows very quickly with the number of predictors, and you have to train the model (itself a slow process!) for each one. For example, if we have 2 predictors—let’s call them A and B—then we have 3 variable sets to try: A alone, B alone, and finally A and B together. If we have 3 predictors—A, B, and C—then we have 7 to try: A, B, C, AB, BC, AC, and ABC. In general, the number of models we have to train for \(m\) predictors is \(2^m-1\); in other words, when we get to 10 predictors we have over one thousand models to train, and at 20 predictors we have over one million models to train! So although it is a simple method, best subset selection is usually too computationally expensive to use in practice.

Another idea is to iteratively build up a model by adding one predictor variable at a time. This method—known as forward selection [Draper and Smith, 1966, Eforymson, 1966]—is also widely applicable and fairly straightforward. It involves the following steps:

Start with a model having no predictors.

Run the following 3 steps until you run out of predictors:

For each unused predictor, add it to the model to form a candidate model.

Tune all of the candidate models.

Update the model to be the candidate model with the highest cross-validation accuracy.

Select the model that provides the best trade-off between accuracy and simplicity.

Say you have \(m\) total predictors to work with. In the first iteration, you have to make \(m\) candidate models, each with 1 predictor. Then in the second iteration, you have to make \(m-1\) candidate models, each with 2 predictors (the one you chose before and a new one). This pattern continues for as many iterations as you want. If you run the method all the way until you run out of predictors to choose, you will end up training \(\frac{1}{2}m(m+1)\) separate models. This is a big improvement from the \(2^m-1\) models that best subset selection requires you to train! For example, while best subset selection requires training over 1000 candidate models with 10 predictors, forward selection requires training only 55 candidate models. Therefore we will continue the rest of this section using forward selection.

Note

One word of caution before we move on. Every additional model that you train increases the likelihood that you will get unlucky and stumble on a model that has a high cross-validation accuracy estimate, but a low true accuracy on the test data and other future observations. Since forward selection involves training a lot of models, you run a fairly high risk of this happening. To keep this risk low, only use forward selection when you have a large amount of data and a relatively small total number of predictors. More advanced methods do not suffer from this problem as much; see the additional resources at the end of this chapter for where to learn more about advanced predictor selection methods.

6.8.3. Forward selection in Python#

We now turn to implementing forward selection in Python.

First we will extract a smaller set of predictors to work with in this illustrative example—Smoothness,

Concavity, Perimeter, Irrelevant1, Irrelevant2, and Irrelevant3—as well as the Class variable as the label.

We will also extract the column names for the full set of predictors.

cancer_subset = cancer_irrelevant[

[

"Class",

"Smoothness",

"Concavity",

"Perimeter",

"Irrelevant1",

"Irrelevant2",

"Irrelevant3",

]

]

names = list(cancer_subset.drop(

columns=["Class"]

).columns.values)

cancer_subset

| Class | Smoothness | Concavity | Perimeter | Irrelevant1 | Irrelevant2 | Irrelevant3 | |

|---|---|---|---|---|---|---|---|

| 0 | Malignant | 0.11840 | 0.30010 | 122.80 | 0 | 1 | 0 |

| 1 | Malignant | 0.08474 | 0.08690 | 132.90 | 0 | 1 | 0 |

| 2 | Malignant | 0.10960 | 0.19740 | 130.00 | 1 | 0 | 0 |

| 3 | Malignant | 0.14250 | 0.24140 | 77.58 | 1 | 0 | 0 |

| 4 | Malignant | 0.10030 | 0.19800 | 135.10 | 1 | 0 | 1 |

| ... | ... | ... | ... | ... | ... | ... | ... |

| 564 | Malignant | 0.11100 | 0.24390 | 142.00 | 0 | 0 | 0 |

| 565 | Malignant | 0.09780 | 0.14400 | 131.20 | 0 | 1 | 0 |

| 566 | Malignant | 0.08455 | 0.09251 | 108.30 | 1 | 1 | 0 |

| 567 | Malignant | 0.11780 | 0.35140 | 140.10 | 0 | 0 | 0 |

| 568 | Benign | 0.05263 | 0.00000 | 47.92 | 1 | 1 | 1 |

569 rows × 7 columns

To perform forward selection, we could use the

SequentialFeatureSelector

from scikit-learn; but it is difficult to combine this approach with parameter tuning to find a good number of neighbors

for each set of features. Instead we will code the forward selection algorithm manually.

In particular, we need code that tries adding each available predictor to a model, finding the best, and iterating.

If you recall the end of the wrangling chapter, we mentioned

that sometimes one needs more flexible forms of iteration than what

we have used earlier, and in these cases one typically resorts to

a for loop; see

the control flow section in

Python for Data Analysis [McKinney, 2012].

Here we will use two for loops: one over increasing predictor set sizes

(where you see for i in range(1, n_total + 1): below),

and another to check which predictor to add in each round (where you see for j in range(len(names)) below).

For each set of predictors to try, we extract the subset of predictors,

pass it into a preprocessor, build a Pipeline that tunes

a K-NN classifier using 10-fold cross-validation,

and finally records the estimated accuracy.

from sklearn.compose import make_column_selector

accuracy_dict = {"size": [], "selected_predictors": [], "accuracy": []}

# store the total number of predictors

n_total = len(names)

# start with an empty list of selected predictors

selected = []

# create the pipeline and CV grid search objects

param_grid = {

"kneighborsclassifier__n_neighbors": range(1, 61, 5),

}

cancer_preprocessor = make_column_transformer(

(StandardScaler(), make_column_selector(dtype_include="number"))

)

cancer_tune_pipe = make_pipeline(cancer_preprocessor, KNeighborsClassifier())

cancer_tune_grid = GridSearchCV(

estimator=cancer_tune_pipe,

param_grid=param_grid,

cv=10,

n_jobs=-1

)

# for every possible number of predictors

for i in range(1, n_total + 1):

accs = np.zeros(len(names))

# for every possible predictor to add

for j in range(len(names)):

# Add remaining predictor j to the model

X = cancer_subset[selected + [names[j]]]

y = cancer_subset["Class"]

# Find the best K for this set of predictors

cancer_tune_grid.fit(X, y)

accuracies_grid = pd.DataFrame(cancer_tune_grid.cv_results_)

# Store the tuned accuracy for this set of predictors

accs[j] = accuracies_grid["mean_test_score"].max()

# get the best new set of predictors that maximize cv accuracy

best_set = selected + [names[accs.argmax()]]

# store the results for this round of forward selection

accuracy_dict["size"].append(i)

accuracy_dict["selected_predictors"].append(", ".join(best_set))

accuracy_dict["accuracy"].append(accs.max())

# update the selected & available sets of predictors

selected = best_set

del names[accs.argmax()]

accuracies = pd.DataFrame(accuracy_dict)

accuracies

| size | selected_predictors | accuracy | |

|---|---|---|---|

| 0 | 1 | Perimeter | 0.891103 |

| 1 | 2 | Perimeter, Concavity | 0.917450 |

| 2 | 3 | Perimeter, Concavity, Smoothness | 0.931454 |

| 3 | 4 | Perimeter, Concavity, Smoothness, Irrelevant1 | 0.926253 |

| 4 | 5 | Perimeter, Concavity, Smoothness, Irrelevant1,... | 0.926253 |

| 5 | 6 | Perimeter, Concavity, Smoothness, Irrelevant1,... | 0.906955 |

Interesting! The forward selection procedure first added the three meaningful variables Perimeter,

Concavity, and Smoothness, followed by the irrelevant variables. Fig. 6.12

visualizes the accuracy versus the number of predictors in the model. You can see that

as meaningful predictors are added, the estimated accuracy increases substantially; and as you add irrelevant

variables, the accuracy either exhibits small fluctuations or decreases as the model attempts to tune the number

of neighbors to account for the extra noise. In order to pick the right model from the sequence, you have

to balance high accuracy and model simplicity (i.e., having fewer predictors and a lower chance of overfitting).

The way to find that balance is to look for the elbow

in Fig. 6.12, i.e., the place on the plot where the accuracy stops increasing dramatically and